Cybersecurity

Artificial intelligence is being applied in industrial controls, but manufacturers need to know where their data is processed and who has access to it.

Control AI Access in the OT Environment

By Wayne Labs

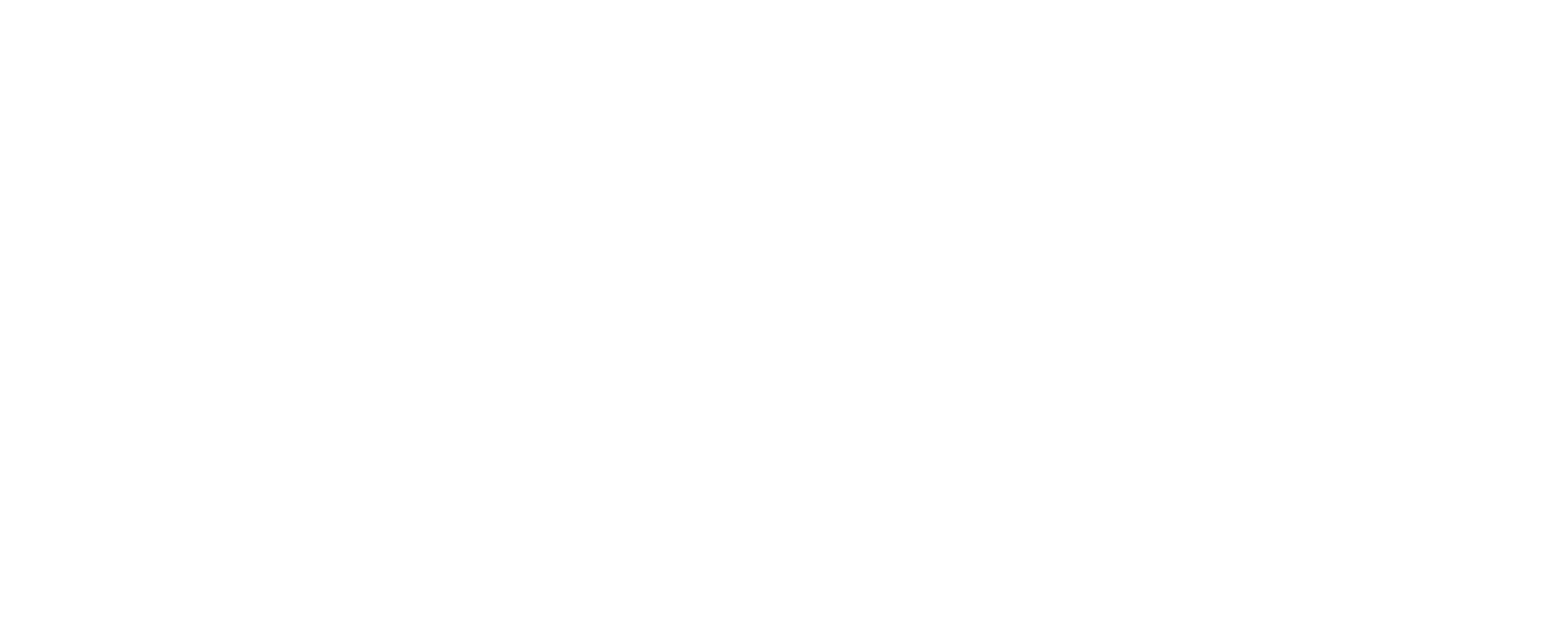

Photo courtesy of akinbostanci / Getty Images

Today, artificial intelligence (AI) seems ubiquitous. It’s in your business systems. It’s being layered into cybersecurity systems and can catch interlopers on your network faster, but, not to be outdone, criminals have also discovered the benefits of using AI to perfect their craft and hacking tools. For example, using generative AI, writing a convincing phishing email can be reduced from a 16-hour project to five minutes.

DCSs and SCADA systems are now embedding AI to help solve process control problems, which has been a major improvement in fine-tuning production efficiency but, if improperly applied, could be a cybersecurity threat. And sometimes well-meaning employees take it upon themselves to experiment with AI from outside internet sources, such as ChatGPT, to “improve” control system performance and maintenance. But what happens to your data once it is mulled over by ChatGPT or another public AI tool?

Is Your AI Secure?

Certainly, the use of AI in software applications has its advantages, but an IBM cybersecurity study titled “Cost of a Data Breach Report 2025” notes that 13% of responding organizations reported breaches that involved their AI models or applications. Of those, almost all (97%) lacked proper AI access controls. The most common of these security incidents occurred in the AI supply chain through compromised applications, application programming interfaces (APIs) or plug-ins. The study’s findings suggest AI is emerging as a high-value target.

While these attacks occurred at the enterprise level, manufacturers need to take a second look at the OT level where industrial applications are using embedded AI.

As AI becomes embedded in industrial environments, organizations need to treat these capabilities as part of the broader cyber-physical attack surface, says Sean Tufts, Claroty field CTO. Systems such as SCADA and DCS were not originally designed with modern AI components or external integrations in mind, so incorporating AI requires additional security considerations.

Organizations should establish strong identity and access controls for AI models, services and supporting infrastructure, Tufts adds. “Many AI-related breaches stem from misconfigured access permissions or unmanaged APIs. Securing the AI supply chain is also critical, which means validating third-party models, plug-ins and dependencies before deploying them into operational environments. Organizations should also maintain comprehensive visibility into all connected assets and communications within their OT networks, so security teams can detect abnormal behavior or unexpected interactions involving AI-enabled systems.”

Tufts notes that protecting industrial environments ultimately requires a risk-based approach that prioritizes the operational processes and physical outcomes these systems support.

“If you are following ISA/IEC 62443 series of standards [1], then you should have little risk from embedded AI,” says Scott Reynolds, past president of the International Society of Automation (ISA). “The concept of Zones and Conduits from the standards is essentially setting up [and] only allowing communication between assets or the corporate network or the internet that you explicitly allow. This aligns to zero-trust concepts heavily discussed in the cybersecurity community these days. If you do not allow embedded AI to talk outside the zone, then it will be blocked.”

Leveraging standards to drive the risk posture and only allowing what is defined explicitly to be communicated between networks will block risks like this by default, Reynolds adds.
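The default-deny idea behind zones and conduits can be sketched in a few lines of Python. This is a minimal illustration only, not an implementation of ISA/IEC 62443; the zone names, ports and the `is_allowed` helper are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Conduit:
    """One explicitly allowed communication path between two zones."""
    src_zone: str
    dst_zone: str
    protocol: str
    port: int


# Hypothetical zones and conduits, for illustration only.
ALLOWED_CONDUITS = frozenset({
    Conduit("control", "historian_dmz", "tcp", 4840),
    Conduit("historian_dmz", "enterprise", "tcp", 443),
})


def is_allowed(src_zone: str, dst_zone: str, protocol: str, port: int) -> bool:
    """Default deny: a flow passes only if it matches an explicit conduit."""
    return Conduit(src_zone, dst_zone, protocol, port) in ALLOWED_CONDUITS


# An embedded AI component in the control zone trying to reach the internet
# matches no conduit, so it is blocked without any AI-specific rule.
ai_to_internet = is_allowed("control", "internet", "tcp", 443)
control_to_dmz = is_allowed("control", "historian_dmz", "tcp", 4840)
```

Nothing in the rule set mentions AI at all. The outbound attempt fails simply because no one defined a conduit for it.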

Safeguard Your OT Data from AI Incursions

AI can be incredibly powerful at spotting early warning signals, but it also broadens the attack surface if it isn’t deployed carefully. A few practical safeguards make a big difference.

- First, govern access to both models and underlying data. That means using role-based access controls, multi-factor authentication and short-lived access tokens while continuously logging and alerting on unusual behavior, especially large or unexpected data transfers.

- Second, treat the AI supply chain as untrusted until proven otherwise. Every model, plug-in, API and firmware update should be validated, version controlled and ideally signed. This prevents compromised components from quietly entering the system.

- Third, keep AI runtimes isolated from core control networks. In OT, separation is safety: using one-way data diodes or brokered interfaces ensures AI insights can flow in without exposing critical control segments to external risks.

- Fourth, protect data quality through provenance checks and monitoring for drift. A poisoned or manipulated dataset can silently skew AI recommendations in ways that operators might not detect.

- Finally, apply continuous monitoring tuned for OT networks — watching for changes in traffic volume, unusual destinations or anomalies tied to AI workloads.

In short, effective protection comes down to governance, segmentation and strong telemetry, paired with operators who know enough about AI to question outputs when something doesn’t look right.

—Shahzad Khan, Yokogawa global system consultant, Life Business Unit
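As one illustration of the supply chain point in the sidebar above, a model file or plug-in can be checked against a pinned digest before it is deployed. The sketch below assumes a hypothetical manifest that maps artifact names to approved SHA-256 digests. A digest check is weaker than a cryptographic signature, so treat it as a starting point rather than a complete control.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def is_approved(name: str, data: bytes, manifest: dict[str, str]) -> bool:
    """Accept an artifact only if its digest matches the pinned manifest entry."""
    expected = manifest.get(name)
    if expected is None:
        return False  # unknown artifacts are untrusted by default
    return hmac.compare_digest(sha256_hex(data), expected)


# Hypothetical artifact and manifest, for illustration only.
approved_bytes = b"model weights v1"
manifest = {"anomaly_model.bin": sha256_hex(approved_bytes)}

ok = is_approved("anomaly_model.bin", approved_bytes, manifest)
tampered = is_approved("anomaly_model.bin", b"model weights v1 (modified)", manifest)
unknown = is_approved("new_plugin.bin", b"anything", manifest)
```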

AI Shouldn’t Change OT Security Management

“AI may be proliferating in the OT space, but it doesn’t fundamentally change how cybersecurity should be managed,” says Lee Coffey, Rockwell Automation strategic marketing manager - CPG. It’s one of many technologies expanding the attack surface as OT environments continue to evolve.

To stay ahead of threats, organizations need a proactive and comprehensive security strategy, Coffey adds. Maintaining an asset inventory and conducting cyber risk assessments can help clarify overall risk posture. From there, layered detection and protection along with real-time monitoring of OT networks can help detect both known and emerging threats.

Rockwell Automation’s OT Security Operations Center provides 24/7 continuous surveillance and rapid detection of cyber threats in industrial environments. Photo courtesy of Rockwell Automation

“The IBM finding that 97% of organizations reporting AI-related breaches lacked proper access controls points to a foundational problem that OT security teams already know well,” says Lauren Blocker, Rockwell Automation cybersecurity sales executive. Connected systems are only as secure as the controls placed around them. That’s true whether the application in question involves AI or not.

In OT environments, securing any connected system starts with knowing exactly what’s on your network, Blocker adds. A list of IP addresses isn’t enough. OT teams need contextual visibility — firmware versions, software inventory, user accounts, privilege levels and configuration states across every asset.

From there, OT security teams can manage user accounts and permissions, disable inactive accounts, enforce password policies and apply least-privilege access controls so that no account has more access than it actually needs.

Change detection is also important, as unexpected modifications to configurations, process variables or privilege levels — even when made through authenticated accounts — can signal a compromised system. By correlating these changes with data from firewalls, network intrusion detection systems and endpoint logs, OT security teams can gain the context needed to distinguish between normal maintenance and a real security threat, Blocker says.
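A simple form of change detection compares a current configuration snapshot with a stored baseline and sets aside the changes covered by an approved work order. The sketch below is a hypothetical illustration; the setting names and the `approved_keys` set are assumptions, and a real deployment would pull both from asset management and change management systems.

```python
def diff_config(baseline: dict, current: dict) -> dict:
    """Return {setting: (old, new)} for every added, removed or modified setting.

    A setting missing from one side is reported with None on that side.
    """
    changes = {}
    for key in baseline.keys() | current.keys():
        old, new = baseline.get(key), current.get(key)
        if old != new:
            changes[key] = (old, new)
    return changes


def unapproved_changes(changes: dict, approved_keys: set) -> dict:
    """Keep only the changes that no approved work order accounts for."""
    return {key: value for key, value in changes.items() if key not in approved_keys}


# Hypothetical snapshots of one asset, for illustration only.
baseline = {"firmware": "2.1.0", "admin_users": ("ops1",), "setpoint_high": 85.0}
current = {"firmware": "2.1.0", "admin_users": ("ops1", "temp7"), "setpoint_high": 92.0}

changes = diff_config(baseline, current)
suspicious = unapproved_changes(changes, approved_keys={"setpoint_high"})
```

Here the setpoint change matches planned maintenance, while the new administrator account does not and would be escalated for review.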

OT Cybersecurity Maturity

Here is a great exercise for determining your OT cybersecurity maturity:

1. Can you draw the network architecture for your OT environment?

2. Can you identify machine-to-machine communications on that drawing?

3. Can you identify user-to-machine communications on that drawing?

4. Are there external/VPN connections into that network?

   a. Who has access to this connection?

   b. What devices can they reach with this connection?

   c. Over what ports and protocols?

5. Can you audit connectivity from local and remote users in this environment?

6. Can you audit changes in this environment?

7. What controls do you have for restricting a user’s access in this environment?

8. Are users sharing credentials in this environment?

9. Can users/devices move east/west in this environment?

10. Can users/devices move north/south in this environment?

—Mike Carr, field CTO, Xona Systems
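Questions 4b and 9 in the exercise above amount to a reachability check. Once the allowed flows are written down, a breadth-first search shows every device a given entry point can eventually reach. The device names and flows below are hypothetical, and the sketch ignores ports and protocols for brevity.

```python
from collections import deque

# Hypothetical allowed flows: source -> devices it may connect to directly.
ALLOWED_FLOWS = {
    "vpn_gateway": {"jump_host"},
    "jump_host": {"hmi_1", "eng_station"},
    "eng_station": {"plc_1", "plc_2"},
    "hmi_1": {"plc_1"},
}


def reachable_from(start: str, flows: dict[str, set[str]]) -> set[str]:
    """Return every device reachable from start by chaining allowed flows."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in flows.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    seen.discard(start)
    return seen


vpn_reach = reachable_from("vpn_gateway", ALLOWED_FLOWS)
```

If the result includes controllers that a remote user should never touch, the drawing has exposed a path worth closing.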

AI and Your Data

Unfortunately, in many data breaches involving AI, there is a human component that can mistakenly give access to protected data, says Matt Malone, Yokogawa OT cybersecurity consultant. “Some of these instances can be reduced through regular awareness training. The remaining risk will need to be mitigated through increased access control and monitoring practices. Continuous monitoring systems can be tuned to alert SOC (security operations center) analysts for large data transfers as well as bandwidth anomalies.”
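One simple way to tune such an alert is to compare each transfer with a baseline built from recent history. The sketch below flags a transfer that exceeds the baseline mean by more than `k` standard deviations. The sample values and the default of `k = 3.0` are assumptions for illustration, not recommendations from the sources quoted here.

```python
from statistics import mean, stdev


def is_anomalous_transfer(history_mb: list[float], observed_mb: float, k: float = 3.0) -> bool:
    """Flag a transfer larger than mean + k standard deviations of the baseline."""
    if len(history_mb) < 2:
        raise ValueError("need at least two baseline samples")
    threshold = mean(history_mb) + k * stdev(history_mb)
    return observed_mb > threshold


# Hypothetical hourly egress volumes (MB) from one engineering workstation.
history = [12.0, 15.0, 11.0, 14.0, 13.0]

normal = is_anomalous_transfer(history, 14.0)
large = is_anomalous_transfer(history, 500.0)
```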

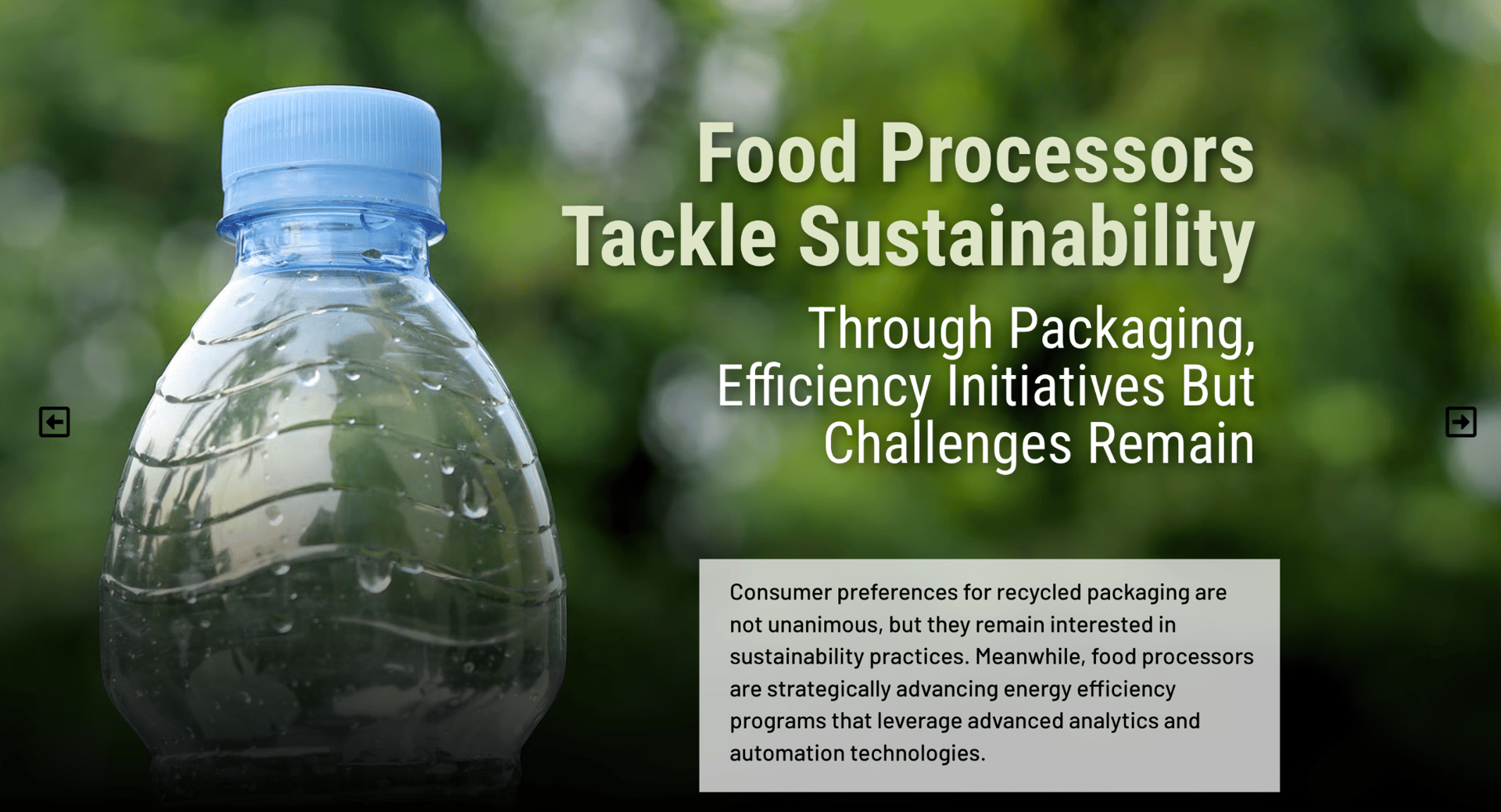

Having a strong model context protocol (MCP) architecture is important everywhere, but doubly so in OT, says Mike Carr, Xona Systems field CTO. Ensure you fully understand both the data that AI tools have access to and the devices, systems and accounts they can control. Organizations should take the time to ensure that their AI tools are isolated from direct access to production manufacturing assets and include human approval in the implementation process of any changes recommended by AI for critical infrastructure.
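The human approval step can be enforced in software rather than left to procedure. In the minimal sketch below, an AI recommendation cannot be written to an asset until a named operator has approved it. The class, function and asset names are hypothetical, and `record_write` stands in for whatever interface actually writes to the control system.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Recommendation:
    """A change proposed by an AI tool, pending human review."""
    target: str
    parameter: str
    proposed_value: float
    approved_by: Optional[str] = None


def approve(rec: Recommendation, operator: str) -> None:
    """Record which operator signed off on the change."""
    rec.approved_by = operator


def apply_change(rec: Recommendation, write: Callable[[str, str, float], None]) -> bool:
    """Write the change only if a human has approved it; otherwise do nothing."""
    if rec.approved_by is None:
        return False
    write(rec.target, rec.parameter, rec.proposed_value)
    return True


# Stand-in for the real write path, for illustration only.
writes = []


def record_write(target: str, parameter: str, value: float) -> None:
    writes.append((target, parameter, value))


rec = Recommendation("mixer_3", "speed_rpm", 120.0)
blocked = apply_change(rec, record_write)   # no approval yet, nothing is written
approve(rec, "operator_a")
applied = apply_change(rec, record_write)   # approved, the change goes through
```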

Xona’s Protocol Break allows users to interact with critical systems without exposing those systems to untrusted devices, eliminating malware attack vectors. Image courtesy of Xona Systems

Long-standing automation software providers understand the required isolation but also know the benefits that AI brings to the table in developing HMI/SCADA applications — for example, GENESIS software from Mitsubishi Electric Iconics Digital Solutions. According to Roy Kok, digital product marketing manager: “Since our automation systems are largely disconnected from the internet for cybersecurity reasons, we will see the initial impact in AI solutions that improve configuration and system integration productivity… GENESIS is being enhanced with an AI assistant for graphic symbol generation. While an extensive library of symbols already exists, users will be able to ask AI to generate new ones or modify existing ones. These are not just visual elements; they are smart symbols with built-in dynamics for scaling, alarm levels, color changes and actions. This effectively creates an unlimited HMI/SCADA symbol library.”

Kok points out two other benefits that AI will bring to automation software. First, if automation software provides APIs, and GENESIS is particularly strong in this area with PowerShell, an AI assistant can connect to those APIs to build applications automatically based on prompts. This improves system integration efficiency, and with the right cybersecurity controls, may also enable systems to detect new equipment on the network and configure themselves automatically.

Second, Kok says there is a natural shift toward applying AI to on-premises applications in a safe and secure manner. AI will be available in two forms for on-site use: one based on a fully contained LLM (large language model) leveraging APIs, and another based on more purpose-built functionality similar to machine learning, using advanced algorithms to perform specific functions. “GENESIS is currently being prototyped with machine learning capabilities for advanced fault detection,” Kok adds. “While alarms require operator input for configuration and management, machine learning can continuously monitor variables and equipment, providing more refined and predictive alerts.”

“Our objective is to deliver the right AI Assistant functionality at the right time to meet market needs,” Kok says. “Many demonstrations today focus on the ‘art of the possible,’ but those are not always practical for real-world applications. When functionality is delivered, it must be easy to learn, apply and support.”

OT Cyber Hygiene in 20 Checks

Network & Identity

- Zones/Conduits per ISA/IEC 62443

- MFA for remote access, just-in-time (JIT) access/just enough administration (JEA) for admins

- No direct internet from OT; egress filtering

- App whitelisting on HMIs/servers

- Rotate and vault credentials; kill shared accounts

Assets & Hardening

- Authoritative asset inventory (hardware, firmware, software)

- Baseline configs; disable unused services/ports

- Vendor-approved patch windows; rollback plan

- Secure logging on switches, firewalls, servers

- Time sync and signed configs

Monitoring & Response

- Passive OT network monitoring/deep packet inspection (DPI)

- Alerting on unusual egress/large transfers

- Incident playbooks aligned to plant roles

- Gold image recovery for HMIs/servers

- Offline, tested backups (projects, recipes, historian)

People & Process

- Quarterly awareness and phishing drills

- Contractor/vendor access policies; session recording

- AI governance for plant data and models

- Annual risk assessment and penetration testing

- Post-incident reviews mapped to control improvements

Source: Yokogawa

The Shadow Knows…

In the old-time radio show, Detective Lamont Cranston, aka “The Shadow,” used his hypnotic powers to fight crime by peering into the hearts of men. But “shadow AI” is not a desirable function in your OT system. In the 2025 IBM study, 20% of respondents said they suffered a data breach due to security incidents involving “shadow AI.” Shadow AI refers to the unauthorized, unmonitored use of AI tools (like ChatGPT, Midjourney or plug-ins) by employees for business tasks without IT/OT department approval. Shadow AI creates significant security risks, including data leakage of proprietary information (think recipes, financial data), compliance violations and increased operational vulnerabilities. In fact, the IBM study showed 63% of companies lack AI governance policies.

Simply put, according to Rockwell’s Blocker, “Shadow AI is a specific version of a problem OT security teams already deal with: unauthorized software running where it shouldn’t. The same practices that catch unauthorized applications in an OT environment apply here.”

“Minimizing these incidents starts with establishing clear AI governance policies and acceptable use guidelines, so employees understand what tools are permitted and how they should be used,” says Claroty’s Tufts. Organizations should also implement monitoring and access controls that provide visibility into where AI tools are being used and what data may be interacting with them.

“In many ways, shadow AI can be somewhat easier to manage in OT environments because those networks are typically more controlled and segmented,” Tufts adds. “Industrial environments already rely on strict change management processes and limited connectivity to maintain uptime and safety.”

For the OT side to use public AI tools, your OT space would need to be connected directly to the internet, says ISA’s Reynolds. “This is bad practice, and you should never allow internet DNS to be translated from your OT network.” Ideally, you should not talk to the internet at all from your OT environment, he adds. “There are exceptions to this, but they need to be heavily monitored and controlled.”

“If you have this in place, the risk of shadow AI is that shadow IT is being used in your OT environment,” Reynolds says. “There should already be strong baselines, governance and monitoring for shadow IT on your OT network, and if that is the case, shadow AI should not be an issue on the OT network.”
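One way to verify that internet names are not being resolved from the OT network is to review DNS logs for external lookups that originate from OT addresses. The sketch below assumes a hypothetical OT subnet, a hypothetical internal DNS suffix and a simplified log of (client IP, queried name) pairs. Real DNS logs will need their own parsing.

```python
import ipaddress

# Hypothetical OT address space and internal DNS zone, for illustration only.
OT_SUBNETS = [ipaddress.ip_network("10.20.0.0/16")]
INTERNAL_SUFFIXES = (".plant.example",)


def is_ot_host(ip: str) -> bool:
    """Return True if the address falls inside a known OT subnet."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in OT_SUBNETS)


def external_queries(dns_log):
    """Return (client_ip, name) pairs where an OT host looked up an external name."""
    flagged = []
    for client_ip, name in dns_log:
        name = name.rstrip(".").lower()
        if is_ot_host(client_ip) and not name.endswith(INTERNAL_SUFFIXES):
            flagged.append((client_ip, name))
    return flagged


dns_log = [
    ("10.20.5.17", "historian.plant.example"),  # internal lookup from OT
    ("10.20.5.17", "chat.example.com"),         # external lookup from OT
    ("192.168.1.9", "chat.example.com"),        # external lookup from outside OT
]
flagged = external_queries(dns_log)
```

Any entry in `flagged` points to either a misconfigured exception or software, possibly an AI tool, that is trying to reach outside the plant.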

Rockwell Automation launched a new Security Operations Center in Singapore on Feb. 9, 2026. The center is designed to provide 24/7 Managed Detection and Response (MDR) services to protect industrial OT networks across the Asia-Pacific region. Image courtesy of Rockwell Automation

“Human error remains one of the leading causes of data breaches in industrial environments, and shadow AI is a clear example of how employees can unintentionally introduce risks,” says Rockwell’s Coffey.

“Minimizing these risks starts with strong governance,” Coffey adds. “Organizations should define how AI tools can be used and what data can be fed into them. Security training should also be provided regularly and refreshed to address the latest risks, such as shadow AI. Organizations should also regularly review employee access to sensitive systems and information.”

Training users on what is allowed and prohibited along with the reasoning behind those policies helps to train behavior, which will always win against data loss prevention (DLP) tools trying to play catch up, says Xona’s Carr. “Hosting approved AI tools with strong MCP (model context protocol) will help, but if your MCP doesn’t give users a clear answer they may be likely to go to other tools outside of the organization’s control. Trying to control access to non-approved tools can also help [by] using firewall rules or context-sensitive web content filtering.”

“One of the most effective approaches to minimizing shadow AI is maintaining a software bill of materials (SBOM),” Blocker says. “It gives security teams a complete picture of every software application deployed across the OT environment, including unauthorized tools, and ties that inventory to the criticality of the assets those tools are running on. If someone has introduced an unapproved AI application on a system connected to your production network, a well-maintained SBOM will surface it.”

Application allow-listing adds another layer, Blocker says. By defining exactly which applications are permitted to run on a given system, you block unauthorized tools outright rather than trying to detect them after the fact.
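At its core, the comparison between an approved list and what is actually installed is a set difference. The sketch below assumes a hypothetical approved list of (name, version) pairs and a per-host software inventory. It only illustrates the detection side; a real allow-listing tool also blocks execution.

```python
# Hypothetical approved software list, for illustration only.
APPROVED = {("hmi_runtime", "5.2"), ("historian_client", "3.1")}


def unauthorized_software(observed: dict, approved: set) -> dict:
    """Return {host: packages} for every host running software not on the approved list."""
    findings = {}
    for host, packages in observed.items():
        extra = packages - approved
        if extra:
            findings[host] = extra
    return findings


# Hypothetical inventory collected from two OT hosts.
observed = {
    "hmi_1": {("hmi_runtime", "5.2")},
    "eng_station": {("hmi_runtime", "5.2"), ("ai_chat_plugin", "0.9")},
}

findings = unauthorized_software(observed, APPROVED)
```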

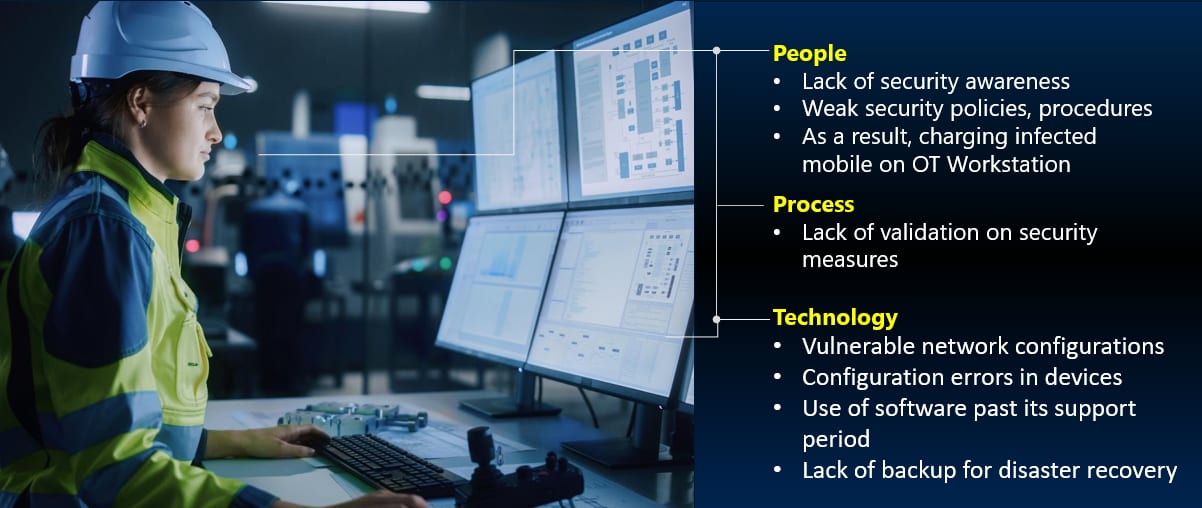

Cybersecurity risks must be managed by a combination of the correct hardware, software and procedures — as well as by people thoroughly acquainted with cybersecurity procedures. Image courtesy of Yokogawa

Shahzad Khan, Yokogawa global system consultant, Life Business Unit, sums it up succinctly:

People and policy: Clear AI governance (what’s allowed, where data may flow), recurring awareness training and periodic tests. Prohibit exporting recipes, batch parameters or P&IDs to external tools.

Controls and containment: No direct internet access from OT; application whitelisting on HMIs/engineer stations; domain controls to block unapproved AI sites and ports; and approved, internally hosted AI tools where a business case exists.

With governance plus technical guardrails, shadow AI becomes the exception, not the norm.

Weak Links in OT Cybersecurity

As control system suppliers introduce newer equipment, there is a growing industry-wide requirement for vendors to ensure their devices meet cybersecurity compliance standards. This was not a consideration with older technology, as systems were largely offline and isolated. However, with the widespread adoption of Ethernet networks, OT environments have changed significantly. They are more connected than ever and demand a stronger, more comprehensive security infrastructure.

Legacy equipment, by contrast, was designed with little to no built-in security features, making it highly vulnerable when exposed to modern networks or the internet. This concern extends beyond PCs and PLCs to any device that communicates within the OT environment. It’s essential that organizations stay current with the latest technology and maintain a forward-looking plan to modernize their systems wherever possible, ensuring access to the newest security features, patches and compliance capabilities. At Jordan Engineering, we have modernized a range of systems to meet the specified needs of each client while minimizing the impact to production through extensive planning, execution and support.

When selecting new equipment, choosing devices that adhere to established industry standards is critical, even in environments where users may not consistently follow cybersecurity best practices. Standards-compliant devices can effectively enforce good security behavior by design, providing a baseline level of protection regardless of user habits. Combined with thoughtful network segmentation, organizations can further reduce risk by ensuring that process lines and critical systems are isolated from networks that have no legitimate reason to access them.

In our experience working across industrial environments, training is equally important. Helping users understand the real-world consequences of cybersecurity threats through concrete examples of past incidents builds awareness and a culture of vigilance. As teams develop and consistently follow company-defined standards, they become more attuned to potential risks in their day-to-day work. Data is one of the most valuable assets in today’s world, and protecting it is a shared responsibility that starts with education, the right tools and a commitment to continuous improvement.

—Marloc Harris, cybersecurity ambassador, Jordan Engineering, Inc., a Control System Integrators Association Member

Strategy Failures — or Just Plain Cybersecurity Fatigue

The 2025 IBM study reports a distressing number: The share of companies that plan to invest in enterprise security following a breach dropped significantly, to 49% from 63% the year before. Thus, fewer than half intend to invest in a security plan that focuses on AI-driven security solutions or services, such as threat detection and response, incident response planning and testing, and data security or protection tools. Why?

There are a few factors that likely contribute to this trend, says Claroty’s Tufts. “Many organizations are operating under tight budgets and competing priorities, so even after a security incident, leadership may hesitate to significantly increase spending without a clear understanding of the return on investment. In some cases, companies may also feel frustrated if prior security investments didn’t prevent a breach, which can create skepticism about whether additional tools will meaningfully reduce risk.”

“We see three realities: breach fatigue, budget drag during recovery and unclear ROI from legacy tools that underdelivered,” says Yokogawa’s Khan. OT is different because downtime hits safety, quality and yield — so leaders tend to invest once they connect cybersecurity to business risk and resilience. The framing that resonates is:

- Cybersecurity is risk management, not a one-off project.

- Aim for cyber resilience: Prevent what you can, detect fast, contain reliably and recover with tested playbooks.

- When plants tie security metrics to batch success, overall equipment effectiveness (OEE) and recall avoidance, funding becomes a continuity decision — not an optional expense.

In OT environments, the dynamic can be different, Tufts adds. “Systems like SCADA and DCS support critical physical processes, so the potential consequences of a cyber incident extend beyond data loss to operational disruption, safety concerns, or the loss of product custodianship. Because of that, organizations operating critical infrastructure often view cybersecurity investments through a resilience and risk-reduction lens rather than purely as a technology upgrade.”

“I believe there are two opposing forces involved here,” says Xona’s Carr. “On one hand, budgets are being squeezed in many places, leading to reviews of current spending and oftentimes cutting budgets instead of expanding them. But the flip side of this is that OT environments are typically profit centers. OT does have an advantage here, as the business can typically tell you to the penny exactly what an outage costs per hour. It is easier to justify an ounce of prevention versus a pound of outage when you know exactly what both cost.”

A security breach is simply an occasion to reassess your risk posture, says ISA’s Reynolds. “In OT, you should review the security zones and the security levels from ISA/IEC 62443. Did the breach impact the security level to an expected amount based on the complexity of the attack? Did your most critical assets get impacted? What controls did not protect as expected? What additional layers of controls should be put in place to address the risk? The solutions could be AI-based tools or not to mitigate the risk.”

Don’t Become Lax

Many vulnerabilities result from basic errors rather than advanced threats, says Mitsubishi’s Kok. When a breach occurs, organizations often address the immediate issue and improve internal awareness, which can reduce the perceived need for additional investment.

“A renewed focus on existing knowledge and best practices often leads organizations to believe they have already addressed the root cause,” Kok adds. “However, this can create a false sense of security.”

Resource:

[1] “ISA/IEC 62443 Series of Standards,” International Society of Automation website